People in this thread have very interesting ideas of what “shit hardware” is

My cluster ranges from 4th gen to 8th gen Intel stuff. 8th gen is the newest I’ve ever had (until I built a 5800X3D PC).

I’ve seen people claiming 9th gen is “ancient”. Like…ok moneybags.

My 9th gen Intel is still not the bottleneck of my 120 Hz 4K/AI rig, not by a long shot.

Yep, any Core i3 is fine even for desktop use, given an SSD and enough RAM. Once you delve into the Core 2 era, you start having problems because those CPUs lack the compression and encryption instructions needed for day-to-day smoothness. In a server you might get away with a Core 2 Duo as long as you don’t use full disk encryption and get an SSD, or at least use RAM for caching. Though that would be kind of a bizarre setup on a computer with 512 MB of RAM.
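If you want to check whether a given box has hardware AES before turning on full disk encryption, here is a minimal sketch. It assumes an x86 Linux machine, where `/proc/cpuinfo` has a `flags` line and AES-NI shows up as the `aes` flag (ARM kernels use a `Features` line instead, which this does not handle). The helper names `cpu_flags` and `has_aes_ni` are made up for illustration.

```python
def cpu_flags(cpuinfo_text):
    """Return the set of feature flags found in /proc/cpuinfo-style text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        # x86 kernels list features on a line whose key is exactly "flags"
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def has_aes_ni(cpuinfo_text):
    """True if the CPU advertises hardware AES (the 'aes' flag)."""
    return "aes" in cpu_flags(cpuinfo_text)
```

On a real machine you would feed it the file contents, e.g. `has_aes_ni(open("/proc/cpuinfo").read())`. A Core 2 era CPU should come back `False`, since AES-NI only arrived in later generations.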

I was for a while. Hosted a LOT of stuff on an i5-4690K overclocked to hell and back. It did its job great until I replaced it.

Now my servers don’t lag anymore.

EDIT: CPU usage was almost always at max. I was just redlining that thing for ~3 years. Cooling was a beefy Noctua air cooler so it stayed at ~60 C. An absolute power house.

4690k was solid! Mine is retired, though. Now I selfhost on ARM

I retired mine with a 12600K and I’m not sure what to do with it now.

4 gigs of RAM is enough to host many singular projects - your own backup server or VPN for instance. It’s only if you want to do many things simultaneously that things get slow.


Maybe not shit, but exotic at that time, year 2012.

The first Raspberry Pi, model B 512 MB RAM, with an external 40 GB 3.5" HDD connected to USB 2.0. It was running ARM Arch BTW.

Next, cheap, second hand mini desktop Asus Eee Box.

32 bit Intel Atom like N270, max. 1 GB RAM DDR2 I think.

Real metal under the plastic shell.

Could even run without active cooling (I broke a fan connector).

What’re you hosting on them?

Mainly telemetry, like temperature inside, outside.

A script to read the data and push it into an RRD, later PostgreSQL.

lighttpd to serve static content, later PHP.

Once it served as a bridge between the LAN and an LTE USB modem.

I have one of these that I use for Pi-hole. I bought it as soon as they were available. Didn’t realise it was 2012, seemed earlier than that.

This was my media server and Kodi player for like 3 years…still have my Pi 1 lying around. Now I have a shitty Chinese desktop I built this year with a 3rd-gen i5 and 8 GB RAM.

I’m sure a lot of people’s self hosting journey started on junk hardware… “try it out”, followed by “oh this is cool” followed by “omg I could do this, that and that” followed by dumping that hand-me-down garbage hardware you were using for something new and shiny specifically for the server.

My unRAID journey was this exactly. I now have a rack-mounted case with 12 hot-swap bays, a multi-core Ryzen 9, and ECC RAM, but it started out as my ‘old’ PC with a few old/small HDDs.

Maybe a more reasonable question: Is there anyone here self-hosting on non-shit hardware? 😅

I’m happy with my little N100

Rehabilitated HP Z440 workstation, checking in! Popped in a used $20 E5-2620 v4 Xeon CPU and 64 GB of RAM and it sails for my use cases. TrueNAS as the base OS and a Talos k8s cluster in a VM to handle apps. Old but gold.

10400F running my NAS/Plex server and raspberry pi 5 running PiHole

I have pi-hole on my Mac mini using docker but I stopped using it, it makes some things super laggy to load

Interesting, I haven’t had any issues with things loading with mine, maybe it’s your adlists causing issues? Try disabling some, there might be false positives in there giving you issues

I tried the default ones

2 GB RAM rasp pi 4 :))

Me using Threadripper 7960X and R5 6600H for my servers: 🤭

You can pry my gen8 hp microserver from my cold, dead hands.

It’s getting up there in years but I’m running a Dell T5610 with 128GB RAM. Once I start my new job I might upgrade cause it’s having issues running my MC server.

It’s not top of the line, but my Ryzen 1700 is way overkill for my NAS. I’ll probably add a build server, not because I need it, but because I can.

Enterprise level hardware costs a lot, is noisy and needs a dedicated server room, old laptops cost nothing.

I got a 1U rack server for free from a local business that was upgrading their entire fleet. Would’ve been e-waste otherwise, so they were happy to dump it off on me. I was excited to experiment with it.

Until I got it home and found out it was as loud as a vacuum cleaner with all those fans. Oh, god no…

I was living with my parents at the time, and they had a basement I could stick it in where its noise pollution was minimal. I mounted it up to a LackRack.

Since moving out to a 1 bedroom apartment, I haven’t booted it. It’s just a 70 pound coffee table now. :/

Got all my docker containers on an i3-4130T. It’s fine.

I had quite a few docker containers going on a Raspberry Pi 4. Worked fine. Though it did have 8GB of RAM to be fair

I had an old Acer SFF desktop machine (circa 2009) with an AMD Athlon II 435 X3 (equivalent to the Intel Core i3-560) with a 95W TDP, 4 GB of DDR2 RAM, and two 1 TB hard drives running in RAID 0 (both HDDs had over 30k hours by the time I put them in). The clunker consumed 50W at idle. I planned on running it into the ground so I could finally send it off to a computer recycler without guilt.

I thought it was nearing death anyways, since the power button only worked if the computer was flipped upside down. I have no idea why this was the case, the computer would keep running normally afterwards once turned right side up.

The thing would not die. I used it as a dummy machine to run one-off scripts I wrote, a seedbox that would seed new Linux ISOs as they were released (genuinely, it was RAID 0 and I wouldn’t have downloaded anything useful), a Tor relay and, at one point, a script to just endlessly download Linux ISOs overnight to measure bandwidth over the Chinanet backbone.

It was a terrible machine by 2023, but I found I used it the most because it was my playground for all the dumb things that I wouldn’t subject my regular home production environments to. Finally recycled it last year, after 5 years of use, when it became apparent it wasn’t going to die and far better USFF 1L Tiny PC machines (i5-6500T CPUs) were going on eBay for $60. The power usage and wasted heat of an ancient 95W TDP CPU just couldn’t justify its continued operation.
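For a rough sense of what an always-on idle draw adds up to, here is a back-of-the-envelope sketch. The 50 W figure is the idle draw mentioned above; the electricity price is a placeholder assumption you would swap for your own tariff, and the function names are just for illustration.

```python
HOURS_PER_YEAR = 24 * 365  # ignoring leap years


def annual_kwh(watts):
    """Energy used in a year by a device drawing `watts` continuously."""
    return watts * HOURS_PER_YEAR / 1000


def annual_cost(watts, price_per_kwh):
    """Yearly running cost; price_per_kwh is whatever your utility charges."""
    return annual_kwh(watts) * price_per_kwh


idle_kwh = annual_kwh(50)           # 438.0 kWh per year at a constant 50 W
idle_cost = annual_cost(50, 0.30)   # 0.30 per kWh is an assumed example price
```

That only covers idle; a 95W TDP chip under load will pull more, so treat it as a floor rather than an estimate of the real bill.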

Always wanted an X3, just such an oddball thing, I love this. I had a 965 X4.

The X3 CPUs were essentially quad cores where one of the cores failed a quality control check. Using a higher end Mobo, it was possible to unlock the fourth core with varying results. This was a cheap consumer Acer prebuilt though, so I didn’t have that option.

You can do quite a bit with 4GB RAM. A lot of people use VPSes with 4GB (or less) RAM for web hosting, small database servers, backups, etc. Big providers like DigitalOcean tend to have 1GB RAM in their lowest plans.

your hardware ain’t shit until it’s a first gen core2duo in a random Dell office PC and 2gb of memory that you specifically only use just because it’s a cheaper way to get x86 when you can’t use your raspberry pi.

Also they lie most of the time and it may technically run fine on more memory, especially if it’s older when dimm capacities were a lot lower than they can be now. It just won’t be “supported”.

I run a local LLM on my gaming computer that’s like a decade old now, with an old 1070 Ti 8 GB VRAM card. It does a good job running Mistral Small 22B at 3 t/s, which I think is pretty good. But any tech enthusiast into LLMs probably looks at those numbers and wonders how I can stand such a slow token speed. I look at their multi-card data center racks with 5x 4090s and wonder how the hell they can afford it.

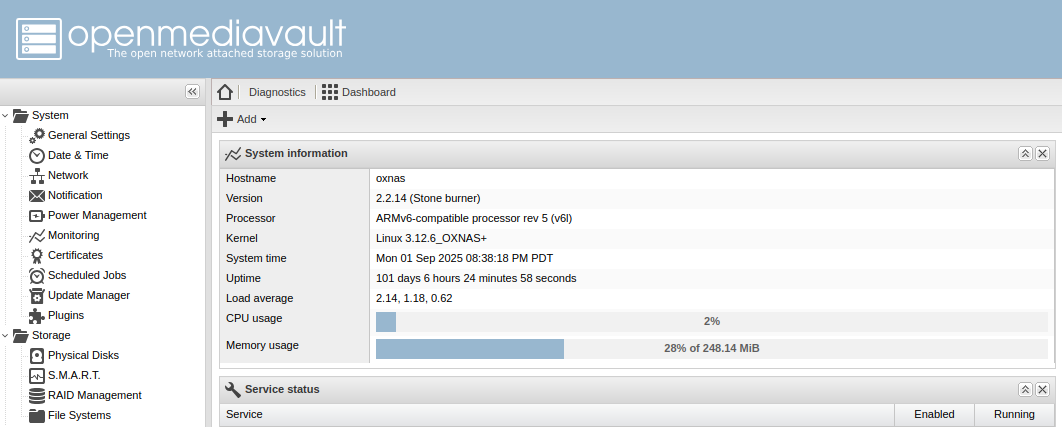

Does this count? ARMv6, 256 MB RAM, running OpenMediaVault…hmm, I have to fix my clock. LOL

3x Intel NUC 6th gen i5 (2 cores) 32gb RAM. Proxmox cluster with ceph.

I just ignored the limitation and tried a single 32 GB SO-DIMM once (out of a laptop) and it worked fine, but went back to 2x16 GB DIMMs since the limit was still the 2 CPU cores. Lol.

Running that cluster 7 or so years now since I bought them new.

I suggest only running off shit tier since three nodes gives redundancy and enough performance. I’ve run entire proofs of concept for clients off them. Dual domain controllers and FC, RD gateway, broker, session hosts, FSLogix, etc. Back when MS had only just bought that tech. Meanwhile my home “ARR” just plugs on in docker containers. Even my OPNsense router is virtual, running on them. Just get a proper managed switch and take the internet in on a VLAN into the guest VM on a separate virtual NIC.

Point is, it’s still capable today.

How is ceph working out for you btw? I’m looking into distributed storage solutions rn. My usecase is to have a single unified filesystem/index, but to store the contents of the files on different machines, possibly with redundancy. In particular, I want to be able to upload some files to the cluster and be able to see them (the directory structure and filenames) even when the underlying machine storing their content goes offline. Is that a valid usecase for ceph?

I’m far from an expert, sorry, but my experience is so far so good (literally wizard-configured in Proxmox, set and forget), even through a single disk loss. Performance for VM disks was great.

I can’t see why regular files would be any different.

I have 3 disks, one on each host, with ceph handling 2 copies (tolerant to 1 disk loss) distributed across them. That’s practically what I think you’re after.
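As a rough sanity check on what a replicated setup like that gives you, here is a tiny sketch. It is only simple replication arithmetic, not Ceph’s actual accounting: it ignores overhead, full ratios, and pool settings like `min_size`. The 1 TB disk sizes are made up for the example, since the real sizes aren’t stated.

```python
def usable_capacity(disk_sizes_tb, replicas):
    """Approximate usable space when every object is stored `replicas` times."""
    return sum(disk_sizes_tb) / replicas


def tolerated_losses(replicas):
    """How many copies can vanish while at least one copy still survives."""
    return replicas - 1


disks = [1.0, 1.0, 1.0]               # hypothetical: one 1 TB disk per host
usable = usable_capacity(disks, 2)    # 2 copies of everything
spare = tolerated_losses(2)
```

With three 1 TB disks and 2 copies that works out to about 1.5 TB usable and one disk you can afford to lose, which lines up with the “tolerant to 1 disk loss” setup described above.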

I’m not sure about seeing the file system while all the hosts are offline, but if you’ve got any one system with a valid copy online you should be able to see it. I do. But my emphasis is generally on getting the host back online.

I’m not 100% sure what you’re trying to do, but a mix of ceph as remote storage plus something like Syncthing on an endpoint to send stuff to it might work? Syncthing might just work without ceph.

I also run zfs on an 8 disk nas that’s my primary storage with shares for my docker to send stuff, and media server to get it off. That’s just truenas scale. That way it handles data similarly. Zfs is also very good, but until scale came out, it wasn’t really possible to have the “add a compute node to expand your storage pool” which is how I want my vm hosts. Zfs scale looks way harder than ceph.

Not sure if any of that is helpful for your case but I recommend trying something if you’ve got spare hardware, and see how it goes on dummy data, then blow it away try something else. See how it acts when you take a machine offline. When you know what you want, do a final blow away and implement it with the way you learned to do it best.

> Not sure if any of that is helpful for your case but I recommend trying something if you’ve got spare hardware, and see how it goes on dummy data, then blow it away try something else.

This is good advice, thanks! Pretty much what I’m doing right now. Already tried it with IPFS, and found that it didn’t meet my needs. Currently setting up a tahoe-lafs grid to see how it works. Will try out ceph after this.

I started my self hosting journey on a Dell all-in-one PC with 4 GB RAM, 500 GB hard drive, and Intel Pentium, running Proxmox, Nextcloud, and I think Home Assistant. I upgraded it eventually, now I’m on a build with Ryzen 3600, 32 GB RAM, 2 TB SSD, and 4x4 TB HDD

My first server was a single-core Pentium - maybe even 486 - desktop I got from university surplus. That started a train of upgrading my server to the old desktop every 5-or-so years, which meant the server was typically 5-10 years old. The last system was pretty power-hungry, though, so the latest upgrade was an N100/16 GB/120 GB system SSD.

I have hopes that the N100 will last 10 years, but I’m at the point where it wouldn’t be awful to add a low-cost, low-power computer to my tech upgrade cycle. Old hardware is definitely a great way to start a self-hosting journey.