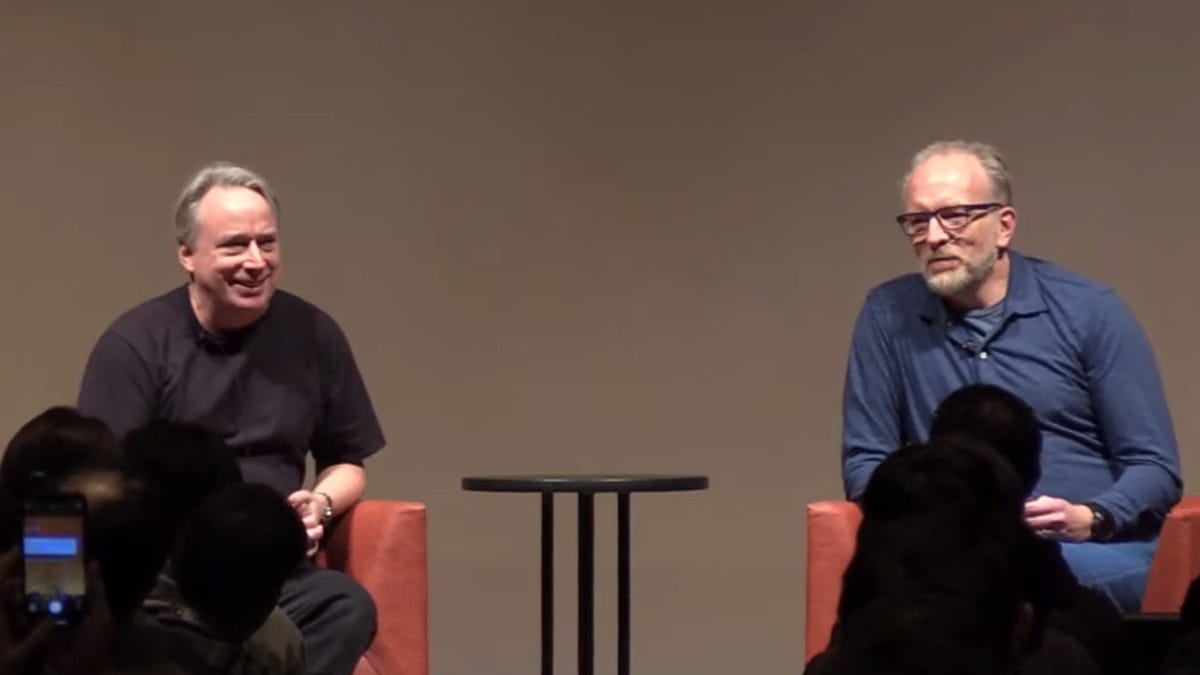

At Open Source Summit Japan, Linux and Git creator Linus Torvalds talked about Rust in Linux, Linux maintainer fatigue, and AI’s future role in Linux and open-source development.

Looking ahead, Hohndel said, we must talk about “artificial intelligence large language models (LLM). I typically say artificial intelligence is autocorrect on steroids. Because all a large language model does is it predicts what’s the most likely next word that you’re going to use, and then it extrapolates from there, so not really very intelligent, but obviously, the impact that it has on our lives and the reality we live in is significant.”

Exactly.

It is very intelligent though.

It’s not simple to come up with coherent statements on such a wide variety of tasks.

It’s not just stringing random words together like predictive text. It understands context in a way that is very complex.

It is more knowledgeable than the average person by a huge amount.

For example I asked it to write songs about squidmas, an imaginary holiday I made up to irritate my children. It was able to rewrite Christmas songs but with a squid theme. That’s way more complex than predictive text.

You’re conferring a level of agency where none exists.

It appears to “understand.” It appears to be “knowledgeable.”

But LLMs do neither of those things.

Take this note from an OpenAI dev:

It’s that these models have leveraged so much data they’ve been able to map out relationships between words (or images) in such a way as to be able to generate what seem like new versions of those things.

I grant you that an LLM has more base-level knowledge than any one human, but again this is thanks to a terrifyingly large dataset and a design that means it can access this data reasonably reliably.

But it is still a prediction model. It just has more context, better design and (most importantly) data to make predictions at a level never before seen.

If you’ve ever had a chance to play with a model at a level where you can control some of its basic parameters, it offers a glimpse into just how much of a prediction machine it can be.

My favourite game for a while was to give Midjourney a wildly vague prompt but crank the chaos up to 100 (literally the chaos flag at the highest level) to see what kind of wild connections exist but are being filtered out during “normal” use.

The same with the GPT-3.5 API in the “early days” - you could return multiple versions of the response and see the sausage being made to a very small degree.

It doesn’t take away from the sense of magic using these tools. It just helps frame what’s going on under the hood.
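
To make the “see the sausage being made” point concrete, here is a minimal sketch of temperature sampling. It is not the OpenAI API or Midjourney’s chaos flag; the function name `sample_with_temperature` and the scores in `toy_logits` are made up for illustration. It only shows how one set of next-word scores can yield very different outputs depending on how much randomness you allow.

```python
import math
import random


def sample_with_temperature(logits, temperature, rng):
    """Pick one token from toy scores after temperature scaling (softmax sampling)."""
    scaled = {token: score / temperature for token, score in logits.items()}
    top = max(scaled.values())  # subtract the max for numerical stability
    weights = {token: math.exp(value - top) for token, value in scaled.items()}
    total = sum(weights.values())
    tokens = list(weights)
    probabilities = [weights[token] / total for token in tokens]
    return rng.choices(tokens, weights=probabilities, k=1)[0]


# Hypothetical scores for the word after "The cat sat on the" (invented numbers).
toy_logits = {"mat": 4.0, "sofa": 3.0, "roof": 2.0, "moon": 0.5}

rng = random.Random(0)
for temperature in (0.2, 1.0, 5.0):
    picks = [sample_with_temperature(toy_logits, temperature, rng) for _ in range(10)]
    print(temperature, picks)
```

At a low temperature the top-scoring word wins almost every time; at a high temperature the unlikely options start showing up, which is roughly what asking for several responses at once lets you see.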

Given it’s an artificial intelligence it stands to reason its understanding and knowledge are artificial.

I don’t think there’s any relevance pointing that out anymore. No one thinks it’s conscious or a general AI.

I also don’t see how it’s massively different to our ability to parse and output text tbh.

It’s different to our ability because we actually know what words are; we know they refer to things.

All an LLM sees is tokens. It has absolutely no concept of what language actually is or what things mean; it’s literally just “this number seems to occur after these numbers”.
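
As a deliberately oversimplified sketch of “this number seems to occur after these numbers”, here is a bigram counter over token IDs. A real LLM uses a neural network over long contexts rather than a lookup table, so treat this as an illustration of the framing only. The helper names and the sample sentence are invented for the example.

```python
from collections import Counter, defaultdict


def train_bigram_counts(token_ids):
    """Count how often each token ID is followed by each other token ID."""
    counts = defaultdict(Counter)
    for previous, following in zip(token_ids, token_ids[1:]):
        counts[previous][following] += 1
    return counts


def predict_next(counts, previous_id):
    """Return the most frequent follower of previous_id, or None if it was never followed."""
    followers = counts.get(previous_id)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Turn words into integer IDs; the model below only ever sees the integers.
text = "the cat sat on the mat and the cat slept"
vocab = {}
token_ids = [vocab.setdefault(word, len(vocab)) for word in text.split()]

counts = train_bigram_counts(token_ids)
id_to_word = {index: word for word, index in vocab.items()}
predicted = predict_next(counts, vocab["the"])
print(token_ids)
print("after 'the' ->", id_to_word[predicted])
```

The table knows nothing about cats or mats. It only knows which integer most often followed which.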

That’s kind of a given though. It’s a large language model, so of course its “understanding” can only be in terms of language. In a way, words are its only sense (input), and only way to interact with the world (output). The mechanism isn’t really important, imo, since we could reduce our own understanding to chemical reactions.

Homo sapiens have many more dimensions of awareness, dozens maybe, including sight, hearing, time, pressure, acceleration, etc., and we’ve been collecting data from them all 24/7 since embryo, plus instinct (pre-baked weights) from millions of years of evolution. We know that people born without a sense, let’s say vision, cannot conceptualize visually, even when their sight is restored for a time. I remember reading a while back that a person born blind had their vision fixed, but they didn’t know what “pointy” looked like. They couldn’t know. Do they have a lower quality understanding of a word?

My point being, I don’t think it’s fair to objectively compare understanding between a person and a model without a testable definition of that word. Imo, and feel free to disagree, understanding is no different than merely knowing, it’s just implied that the knowledge is deeper, across multiple dimensions of awareness, including subconscious awareness of our own hormones.

I think that is overly simplistic. Embeddings used for LLMs do definitely include a concept of what things mean and the relationship of things to other things.

E.g., compare the embeddings of Paris, Athens, and London to other cities and they will have a small cosine distance between them. Compare France, Greece, and England and you get the same. Then, very interestingly, look at Paris - France, Athens - Greece, London - England and you’ll find the resulting vectors all align (fundamentally the vector operation seems to account for the relationship “is the capital of”). Then go a step further and compare those vectors to Paris - US, Athens - US, London - Canada. You’ll see the previous set are not aligned with these nearly as much, but these are aligned with each other (the relationship being something like “is a smaller city in this country, named after a famous city in some other country”).

The way attention works there is a whole bunch of semantic meaning baked into embeddings, and by comparing embeddings you can get to pragmatic meaning as well.
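
Here is a rough sketch of the comparison described above. The vectors in `toy_vectors` are hand-made four-dimensional stand-ins, not real embeddings (real ones are learned and have hundreds or thousands of dimensions), so this only demonstrates the arithmetic: subtract the country from the capital, then check whether the resulting offsets point the same way.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def offset(a, b):
    """Element-wise difference a - b."""
    return [x - y for x, y in zip(a, b)]


# Invented toy vectors; the last dimension loosely plays the role of "capital-ness".
toy_vectors = {
    "France":  [1.0, 0.0, 0.0, 0.0],
    "Paris":   [0.9, 0.0, 0.1, 1.0],
    "Greece":  [0.0, 1.0, 0.0, 0.0],
    "Athens":  [0.1, 0.9, 0.0, 1.0],
    "England": [0.0, 0.0, 1.0, 0.0],
    "London":  [0.0, 0.1, 0.9, 1.0],
}

paris_offset = offset(toy_vectors["Paris"], toy_vectors["France"])
athens_offset = offset(toy_vectors["Athens"], toy_vectors["Greece"])
london_offset = offset(toy_vectors["London"], toy_vectors["England"])

print("Paris-France vs Athens-Greece:", cosine_similarity(paris_offset, athens_offset))
print("Paris-France vs London-England:", cosine_similarity(paris_offset, london_offset))
print("Paris vs France (raw vectors):", cosine_similarity(toy_vectors["Paris"], toy_vectors["France"]))
```

With a real embedding model you would swap `toy_vectors` for looked-up embeddings and keep the same two helper functions.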

It’s not intelligent because it’s not thinking.

At least my definition of intelligence is thinking. Otherwise a simple pattern matching algorithm like a regexp is also intelligent, or a sorting algorithm that puts things in the right order.

But I agree it’s very efficient and has more data than any single person ever could. It’s a computer, they are great at storing and processing information.

While I mostly agree, I’d like to point out that GOFAI (good old fashioned AI) exists, and at its core it is basically just pathfinding like a* or something similar. And we still call that AI, because it “intelligently” finds a path quickly.

So my main point is that I agree that it isn’t magic or sapient or anything, but in a sense it is definitely intelligent.
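
For anyone who hasn’t seen it, this is roughly what A* looks like: a minimal sketch on a small grid, assuming 4-way movement, unit step cost, and a Manhattan-distance heuristic. The grid and the function name `astar_steps` are made up for the example.

```python
import heapq


def astar_steps(grid, start, goal):
    """Return the fewest steps from start to goal on a grid (0 = free, 1 = wall), or None."""
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance never overestimates on a 4-connected unit-cost grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    best_cost = {start: 0}
    frontier = [(heuristic(start), 0, start)]  # (estimated total, cost so far, cell)
    while frontier:
        _, cost, cell = heapq.heappop(frontier)
        if cell == goal:
            return cost
        if cost > best_cost.get(cell, float("inf")):
            continue  # stale queue entry; a cheaper route was already found
        row, col = cell
        for neighbor in ((row + 1, col), (row - 1, col), (row, col + 1), (row, col - 1)):
            n_row, n_col = neighbor
            if 0 <= n_row < rows and 0 <= n_col < cols and grid[n_row][n_col] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get(neighbor, float("inf")):
                    best_cost[neighbor] = new_cost
                    heapq.heappush(frontier, (new_cost + heuristic(neighbor), new_cost, neighbor))
    return None


toy_grid = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
]
print(astar_steps(toy_grid, (0, 0), (2, 0)))
```

There is no learning anywhere in it, just a priority queue and a distance estimate, and it still gets filed under AI.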

I’ve heard the argument that we don’t really have a good definition of thinking or intelligence and if it can complete a task or do things…what does it matter if it’s “thinking” or not if the outcome is the same.

I think the defenders of human intellect are holding up our language and thinking as meeting a much higher standard than, for MOST people, they actually do.

A chess champion might be executing critical thinking beyond normal comprehension but I’d say a lot of my interactions with others, my daily experience is just pattern matching the next thing to say or ask.

I think this type of anthropocentrism extends to chess too actually. I’m not an expert on the subject, but I’ve heard that chess AIs are finding success doing unintuitive things like pushing a and h file pawns in openings. If, 10 years ago, some chess grandmaster was doing the same thing and finding success, I imagine they would have been seen as creative, maybe even groundbreaking.

I think the average person under-rates the sophistication of AI. Maybe as a response to the AI hype. Maybe it’s because we’re scared of AI, and it’s comforting to believe that its operations are trivial. I see irrationality and anger cropping up in discussions of AI that I think stem from a fundamental fear of its transformative power.

Yes it’s going to transform everything. It’s about the same as the transformation from typewriter to computer for society. But I still don’t think any machine that predicts the next word is intelligent. However, this is only the beginning. We are not going to be able to keep up with AI soon, and it will work around the clock to get better and better.

We will have those high tech societies from the movies where robots are everywhere and people are quite sad.

You say that; however, we might have stumbled on the groundwork for a GI. Because language is core to our evolutionary advancement. We needed language to build the mental constructs that then enabled logical work.

Imagine if an LLM was able to coordinate the usage of these “logical” AIs like DeepMind etc.

ChatGPT already enabled Internet search and it’s better than if I asked someone to Google something for me.

You could say it’s artificially intelligent haha

Lol get a load of this fool

Bro the AI neural networks have been shown to be building internal world models to be able to do what they do. How is that not thinking?

I am so sick of this anthropocentrism, as if we are special because we are humans. The computers are now doing the same mathematical processes our brains are doing. The LLMs can be compared to a small subsection of our brains. String enough neural-network-based AIs together with different tasks and you’ll get sentience.

Sentience isn’t required for “thinking” to happen; thinking is one of the building blocks for sentience.

Bro

I would have liked for Linus to maintain his angry-man-finger-thrusting self against evil corporates like Nvidia. I suppose I’m asking for too much, but his mild-mannerisms towards developers is a welcome change. Towards such corporates though, not so much. I would have liked some more motivated cursing against Intel and Nvidia and IBM. Oh well.

Other than that (which is a minor gripe from me at the most), touching message from Linus. Indeed, the maintainers are graying, and the current generation isn’t that interested in kernel programming. I’m sure there will be talent around (as long as the big companies need Linux to run their servers, I’m sure someone will turn up), but someone to rise to the helm with a fiery approach to openness is very important to my heart. I don’t think we will ever see another Linus in our lifetime, and I will personally grieve the day Linus and his core set of maintainers pass away.

I am not a programmer, and the best I can do is provide some funding to people who can/would engage directly with the kernel. But if the situation becomes so dire, I too will get my hands dirty, if nothing but to help the cause. Long live FOSS!

Linus was himself a major contributor to making people steer well clear of wanting to work on the Linux kernel. I didn’t need that kind of abuse in my life.

So while he is identifying a problem, it’s a bit like a recovering arsonist homeowner bemoaning the scorch marks on his house.

As I said, his change in behaviour towards fellow developers is a welcome change. There’s no doubt about that.

I just wish he would continue to rage against companies up to no good, especially for FOSS. I never want him to get mild with Nvidia, and I want him to praise AMD a bit (they deserve it, and his opinion holds value).

So you want a fool to validate your insipid worldviews.

After he got a handle on it, Torvalds returned to the kernel. He’s been much more mild-tempered since then. As he mentioned in Tokyo, he won’t be “giving some company the finger. I learned my lesson.”

This is probably a good thing.

Looking ahead, Hohndel said, we must talk about “artificial intelligence large language models (LLM). I typically say artificial intelligence is autocorrect on steroids. Because all a large language model does is it predicts what’s the most likely next word that you’re going to use, and then it extrapolates from there, so not really very intelligent, but obviously, the impact that it has on our lives and the reality we live in is significant. Do you think we will see LLM written code that is submitted to you?”

Torvalds replied, “I’m convinced it’s gonna happen. And it may well be happening already, maybe on a smaller scale where people use it more to help write code.” But, unlike many people, Torvalds isn’t too worried about AI. “It’s clearly something where automation has always helped people write code. This is not anything new at all.”

Indeed, Torvalds hopes that AI might really help by being able “to find the obvious stupid bugs because a lot of the bugs I see are not subtle bugs. Many of them are just stupid bugs, and you don’t need any kind of higher intelligence to find them. But having tools that warn more subtle cases where, for example, it may just say ‘this pattern does not look like the regular pattern. Are you sure this is what you need?’ And the answer may be ‘No, that was not at all what I meant. You found an obvious bug. Thank you very much.’ We actually need autocorrects on steroids. I see AI as a tool that can help us be better at what we do.”

But, “What about hallucinations?,” asked Hohndel. Torvalds, who will never stop being a little snarky, said, “I see the bugs that happen without AI every day. So that’s why I’m not so worried. I think we’re doing just fine at making mistakes on our own.”

There were no questions about whether maintainers would start utilizing LLMs. The questions were focused on how maintainers would respond to LLM-generated (or -assisted) patches being submitted to them. This attitude seems perfectly reasonable to me, but it would have been more interesting to ask questions about whether maintainers would start using LLMs in their work. Torvalds might have responded with a more interesting answer.

It was interesting to hear your perspective!

I’m a newbie programmer (and have been for quite a few years), but I’ve recently started trying to build useful programs. They’re small ones (under 1000 lines of code), but they accomplish the general task well enough. I’m also really busy, so as much as I like learning this stuff, I don’t have a lot of time to dedicate to it. The first program, which was 300 lines of code, took me about a week to build. I did it all myself in Python. It was a really good learning experience. I learned everything from how to read technical specifications to how to package the program for others to easily install.

The second program I built was about 500 lines of code, a little smaller in scope, and prototyped entirely in ChatGPT. I needed to get this done in a weekend, and so I got it done in 6 hours. It used SQLite and a lot of database queries that I didn’t know much about before starting the project, which surely would have taken hours to research. I spent about 4 hours fixing the things ChatGPT screwed up myself. I think I still learned a lot from the project, though I obviously would have learned more if I had to do it myself. One thing I asked it to do was to generate a man page, because I don’t know Groff. I was able to improve it afterward by glancing at the Groff docs, and I’m pretty happy with it. I still have yet to write a man page for the first program, despite wanting to do it over a year ago.

I was not particularly concerned about my programs being used as training data because they used a free license anyway. LLMs seem great for doing the work you don’t want to do, or don’t want to do right now. In a completely unrelated example, I sometimes ask ChatGPT to generate names for countries/continents because I really don’t care that much about that stuff in my story. The ones it comes up with are a lot better than any half-assed stuff I could have thought of, which probably says more about me than anything else.

On the other hand, I really don’t like how LLMs seem to be mainly controlled by large corporations. Most don’t even meet the open source definition, but even if they did, they’re not something a much smaller business can run. I almost want to reject LLMs for that reason on principle. I think we’re also likely to see a dramatic increase in pricing and enshittification in the next few years, once the excitement dies down. I want to avoid becoming dependent on this stuff, so I don’t use it much.

I think LLMs would be great for automating a lot of the junk work away, as you say. The problem I see is they aren’t reliable, and reliability is a crucial aspect of automation. You never really know what you’re going to get out of an LLM. Despite that, they’ll probably save you time anyway.

I’m no expert, but neither is most of the workforce (although kernel work is, again, much more in the expert realm).

I think experts are the ones who would benefit from LLMs the most, despite LLMs consistently producing average work in my experience. They know enough to tell when it’s wrong, and they’re not so close to the code that they miss the obvious. For years, translators have been using machine translation tools to speed up their work, basically relegating them to being translation checkers. Of course, you’d probably see a lot of this with companies that contract translators at pitiful rates per word who need to work really hard to get decent pay. Which means the company now expects everyone to perform at that level, which means everyone needs to use machine translation tools to keep up, which means efficiency is prioritized over quality.

This is a very different scenario to kernel work. Translation has kind of been like that for a while from what I know, so LLMs are just the latest thing to exacerbate the issues.

I’m still pretty undecided on where I fall on the issue of LLMs. Ugh, nothing in life can ever be simple. Sorry for jumping all over the place, lol. That’s why I would have been interested in Linus Torvalds’ opinion :)

That said, Torvalds continued, “Rust has not really shown itself as the next great big thing. But I think during next year, we’ll actually be starting to integrate drivers and some even major subsystems that are starting to use it actively. So it’s one of those things that is going to take years before it’s a big part of the kernel. But it’s certainly shaping up to be one of those.”

I don’t know about that. Languages which are based on standards (C++, JavaScript, C) seem to have much better enduring popularity. I don’t want to see Rust becoming less and less popular, which will lead to fewer available developers (like what is happening with Ruby).

I assumed that he was talking about the fact that the languages he listed have a lot of syntax in common with each other, and with a few other languages. I could be wrong though.

I too can’t wait to compile the kernel (and its modules) on cargo.

I’ll prep my supercomputer.

Speaking as a non Rustacean, I’m pretty okay with it becoming more integrated.

It’s safe, performant, and isn’t any more difficult to pick up than C++. C has a weird aura about it that makes it seem intimidating despite the fact that it is the simplest language (macros notwithstanding) that I’ve ever used.

Based on Google’s recent track record of mind-boggling incompetence on all fronts, I want Go kept as far away from core functionality as humanly possible. This leaves either adding more cruft to an already ungainly C++, continuing to use Boost (another Google product) with C, or to pivot to a more modern language.

Agreed re: Google.

I dunno what the solution is. The world without Google is going to be a very different place. Do you think it’s even possible for them to turn things around?

I think it would take a pretty major sea change for them. They technically split up into Alphabet, but I don’t know of a single person that actually uses that when describing them.

Even if they did change things around, and I would wager that the entrenched bureaucracy will make that impossible, their name is toxic to a lot of tech nerds. We may be a minority, but we talk and people listen. Even the non-techies in my life know that they can’t maintain a simple messaging app, that they responded to (rightful!) concerns about data loss by locking the support threads, and that they have jacked up the price of YouTube on a yearly basis.

They’ve spectacularly failed at video game consoles, social media, banking/credit cards, IoT, messaging, video, and can’t even maintain a semblance of consistency in their office suite. At work I have three different ways to receive instant messages, and it’s a crapshoot as to which one a coworker will use.

And let’s not even get into how absolutely useless their search is now that everything has been gamed by SEO. DuckDuckGo has been my default for years, but now it’s consistently returning better results than big G.

If they managed to correct course tomorrow, it would take multiple years for me to even begin to trust them again.

Yeah. Extremely unlikely and probably impossible.

It’s incredible how very much they have been able to fail but still continue operating.

Yeah… rust in the kernel scares me. Lol they are already worried about not having enough contributors, so…?

they = rust or the linux kernel?

The Linux kernel doesn’t have enough contributors because it’s really difficult, and the entire organisational side of it works on antique tech (IRC and mailing lists). The majority of the project itself is also in C, which has a horrible developer experience: linting, documentation, debugging, code completion, and the lack of a proper IDE. The entire development cycle is convoluted. How do you seriously want to attract people to the project if everything looks like it’s still in a development cycle of the 90s?

If I were to discover a one-line bug in the kernel by reading it, actually testing the one-line fix would take me, as a newbie, probably a solid week. Getting it into the kernel itself would probably take even longer.

The kernel is also known for Linus’ outbursts and being filled with neckbeard elitists. The project in my eyes has an image problem.

As for rust, if that’s what you meant, I’d be interested in knowing the source for not having enough contributors.

If that means an AI-assistant of sorts (like “that OS name that cannot be spoketh”) I’m game.

Will that make some users freak out and make it sound like it’s doomsday, even if they implement an on/off toggle for the AI assistant? Probably.

An AI assistant has nothing to do with the kernel and will never be in it.

It’s something for user space and can be done already. This is for the distro maintainers to decide.

What if AI starts contributing to the kernel and ends up including AI there?

Then it’s over. We can tear it all down and start new.

The Linux Foundation and Kernel devs don’t really deal with the OS layer much. This is something that would need to be implemented at the desktop environment level; like GNOME or KDE. Neither LF nor Linus Torvalds has any say over that.